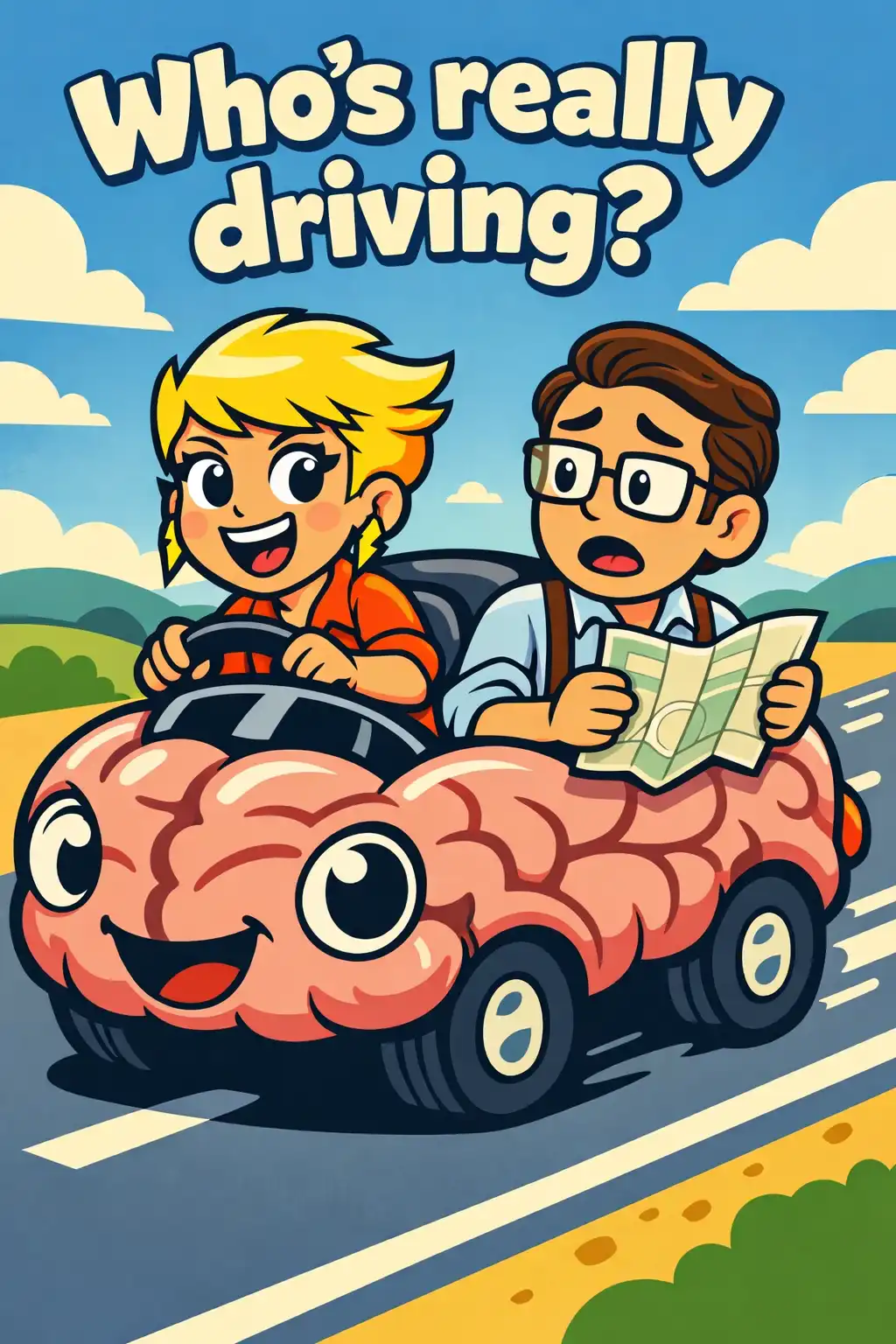

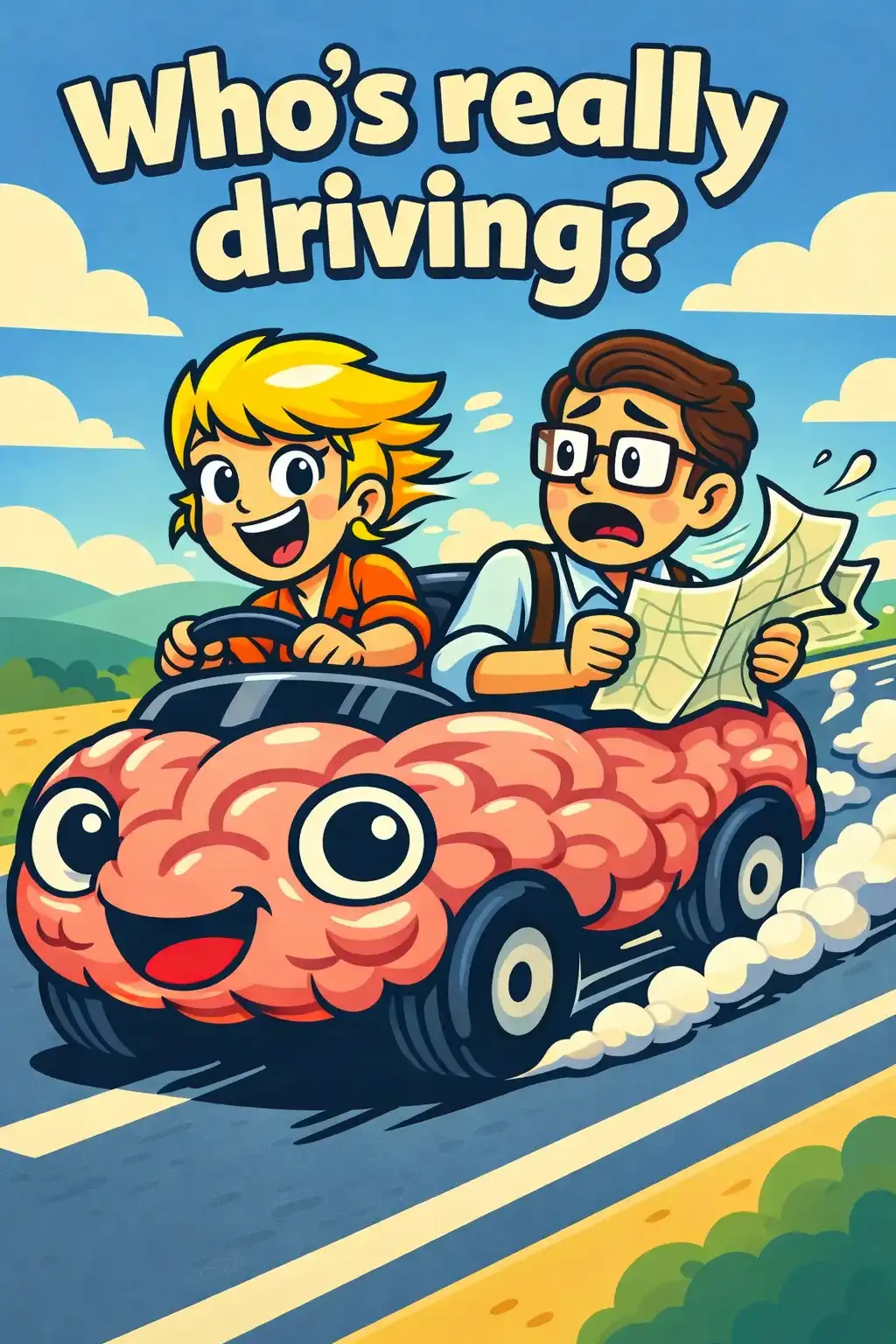

Lesson 1: Two systems run your mind

Imagine glancing at someone's face and instantly knowing they're angry. You didn't analyze anything. You didn't run any calculations. You just knew. That's what Kahneman calls System 1.

Now try solving seventeen times twenty-four in your head. Feel that effort? That slow, deliberate concentration is System 2 at work.

Kahneman spent decades studying human judgment alongside his colleague Amos Tversky, a brilliant Israeli psychologist. Their joint research changed how we understand the mind.

They discovered that we rely on mental shortcuts called heuristics. These shortcuts are usually helpful, but they can also lead to predictable errors, which the two researchers called biases.

Here's the key insight. System 1 is fast, automatic, and always running in the background. System 2 is slow, effortful, and surprisingly lazy.

We like to believe System 2 is in charge. But most of the time, System 1 is quietly making our decisions, and System 2 just nods along without questioning them.